Every fortnight or so we’ll bring you some technical updates that we feel you’ll find useful.

Today’s topics are the latest Privacy Sandbox updates, what’s happening with Buy-Side Transparency Standards, and some updates for webmasters on the latest Google News and Google Discover guidelines for search.

Buy-Side Transparency Standards

Buy-side transparency standards are now being developed by the IAB Tech Lab and are due to be released for public comment and feedback sometime in Q2 2021.

The concept is buyers.json, which is aimed at bringing transparency to the buy-side in the same manner that current standards such as sellers.json and ads.txt have enabled transparency on the sell-side.

Full disclosure of buyer names can help to solve two major issues for our industry:

• It supports identification of malvertisers and other threat actors across DSPs

• It facilitates blocking of identified threat actors across DSPs

The buyers.json standard will largely mirror the existing sellers.json spec, with fields for buyer ID, buyer name, and buyer type. The buyer ID would match the ID that appears in the bidresponse.seatID property in OpenRTB and in the proposed DemandChain object, allowing these values to be tied together easily. The buyer type attribute would indicate whether the buyer is acting as an advertiser or an intermediary.
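
As a rough illustration of the shape described above, the sketch below parses a hypothetical buyers.json document and resolves a seat ID seen in a bid response to a buyer entry. The spec has not been published yet, so the field names (`buyers`, `buyer_id`, `name`, `buyer_type`) and all example values are assumptions modelled on sellers.json, not the final standard.

```python
import json

# Hypothetical buyers.json content. Field names are assumptions modelled on
# sellers.json; the final IAB Tech Lab spec may differ.
BUYERS_JSON = """
{
  "buyers": [
    {"buyer_id": "1001", "name": "Example Advertiser Ltd", "buyer_type": "ADVERTISER"},
    {"buyer_id": "2002", "name": "Example Agency Desk", "buyer_type": "INTERMEDIARY"}
  ]
}
"""


def index_buyers(raw: str) -> dict:
    """Return a dict mapping each buyer ID to its buyers.json entry."""
    document = json.loads(raw)
    return {entry["buyer_id"]: entry for entry in document.get("buyers", [])}


def describe_seat(seat_id: str, buyers: dict) -> str:
    """Resolve a seat ID from a bid response to a readable description."""
    entry = buyers.get(seat_id)
    if entry is None:
        return f"seat {seat_id}: not listed"
    return f"seat {seat_id}: {entry['name']} ({entry['buyer_type']})"


buyers = index_buyers(BUYERS_JSON)
print(describe_seat("1001", buyers))
print(describe_seat("9999", buyers))
```

Because the buyer ID is meant to match the seat ID in the bid response, a plain dictionary lookup is enough to go from a suspicious bid to a named buyer.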

DSPs will post their buyers.json file on their root domain. Because the file is in a simple, human-readable format, ad ops and compliance teams trying to pinpoint the source of an attack will be able to use the information in these files without the support of APIs, complex tools, or highly technical staff.

More sophisticated parties such as SSPs will be able to crawl these files on their own schedule and eliminate manual processes for exchanging buyer information with DSPs.
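
A crawl of this kind could be sketched as follows. The `https://<domain>/buyers.json` location follows from the root-domain placement described above; the DSP domains, the field names and the exact-name matching rule are hypothetical and only serve to show how one buyer might be connected to accounts across several DSPs.

```python
import json
from urllib.request import urlopen


def buyers_json_url(dsp_domain: str) -> str:
    """Build the assumed file location on the DSP's root domain."""
    return f"https://{dsp_domain}/buyers.json"


def fetch_buyers(dsp_domain: str, timeout: float = 10.0) -> list:
    """Download and parse one DSP's file (not called in the demo below)."""
    with urlopen(buyers_json_url(dsp_domain), timeout=timeout) as response:
        return json.load(response).get("buyers", [])


def find_buyer_across_dsps(buyer_name: str, files_by_dsp: dict) -> dict:
    """Map each DSP domain to the buyer IDs registered under buyer_name."""
    wanted = buyer_name.strip().lower()
    matches = {}
    for dsp_domain, entries in files_by_dsp.items():
        ids = [
            entry["buyer_id"]
            for entry in entries
            if entry.get("name", "").strip().lower() == wanted
        ]
        if ids:
            matches[dsp_domain] = ids
    return matches


# Already-fetched sample data so the demo runs offline (all values invented).
crawled = {
    "dsp-one.example": [
        {"buyer_id": "77", "name": "Shady Ads Inc", "buyer_type": "ADVERTISER"},
        {"buyer_id": "78", "name": "Good Brand", "buyer_type": "ADVERTISER"},
    ],
    "dsp-two.example": [
        {"buyer_id": "A-5", "name": "shady ads inc", "buyer_type": "ADVERTISER"},
    ],
}

block_list = find_buyer_across_dsps("Shady Ads Inc", crawled)
print(block_list)
```

In practice, matching on the buyer name alone is likely to be too naive, since the same company may be registered under slightly different names on different platforms.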

The forthcoming buyers.json standard is only the first key step in enabling full buy-side transparency, and will be the foundation upon which future initiatives can be built. By solving the problem of cross-platform buyer identity, buyers.json will allow the industry to turn its attention to fresh efforts such as:

- DemandChain, a new object within OpenRTB that would allow sellers to see all parties that were involved in buying the creative. Just as buyers.json serves as the counterpart to sellers.json, DemandChain is envisioned as the buy-side complement to the SupplyChain.

- Standardising methods for disclosing buyer information on the client-side and through header-bidding frameworks like Prebid. In an ideal world, every creative would have a ‘calling card’ that clearly identifies who bought inventory and who delivered the ad to the page. With this information in hand, buyers.json would be the directory to which a publisher turns to look up the buyer and connect it to specific accounts across DSPs.

- Creating a centralised registry of buyers and exchange mechanism for reputation signals.

- Simple, standardised ways to manage and communicate ad quality preferences across SSPs.

- An industry certification program for ad servers.

More information will follow next quarter and we’ll be inviting some of our members to both test and contribute to these standards in collaboration with IAB Tech Lab’s Supply Chain Working Group.

Privacy Sandbox Updates

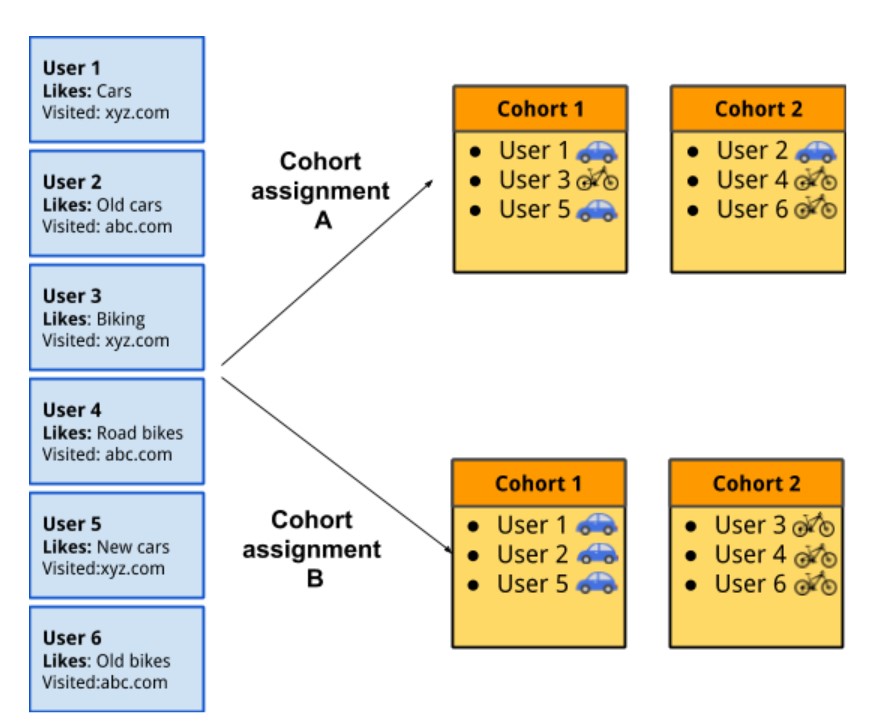

Since Google announced its Privacy Sandbox initiative, a series of proposals has been put forward to allow publishers and marketers to manage and ultimately match consenting users into pre-determined interest groups, or cohorts, without the use of any trackable user IDs. Subsequent proposals and iterations have focused on where these data sets will physically reside, whether the data can continue to feed successfully and safely into machine-learning decisioning and modelling, and where the advertising auctions could physically take place.

Some proposals suggest that all of this would happen in-device or in-browser; others suggest hosting by certified publishers or by trusted and/or benevolent independent third parties. Below is a quick reference guide to the core and most recent proposals in this space, which aim to sustain measurement and targeting of programmatically traded media once third-party cookies are no longer available. We’ll be running some webinars and Q&As on this topic over the coming months, worry not.

TURTLEDOVE (Two Uncorrelated Requests, Then Locally-Executed Decision On Victory) – a building block of the sandbox proposal, which proposes separating any interest groups (cohorts) from any contextual data and publisher-provided IDs when executing a client-side programmatic auction.

FLoC (Federated Learning of Cohorts) – FLoC proposes a draft API that extends the Chrome browser, applying machine learning algorithms to the habits or interests of large numbers of users in order to develop and refine cohorts based upon user behaviour and the sites that an individual visits. For a more detailed explainer click here.

FLEDGE (First Locally-Executed Decision over Groups Experiment) – is a proposed public trial (scheduled for later this year) of the next iteration of TURTLEDOVE based upon industry feedback – and interestingly with the opportunity for ad tech companies to bring their own ad server model into the API.

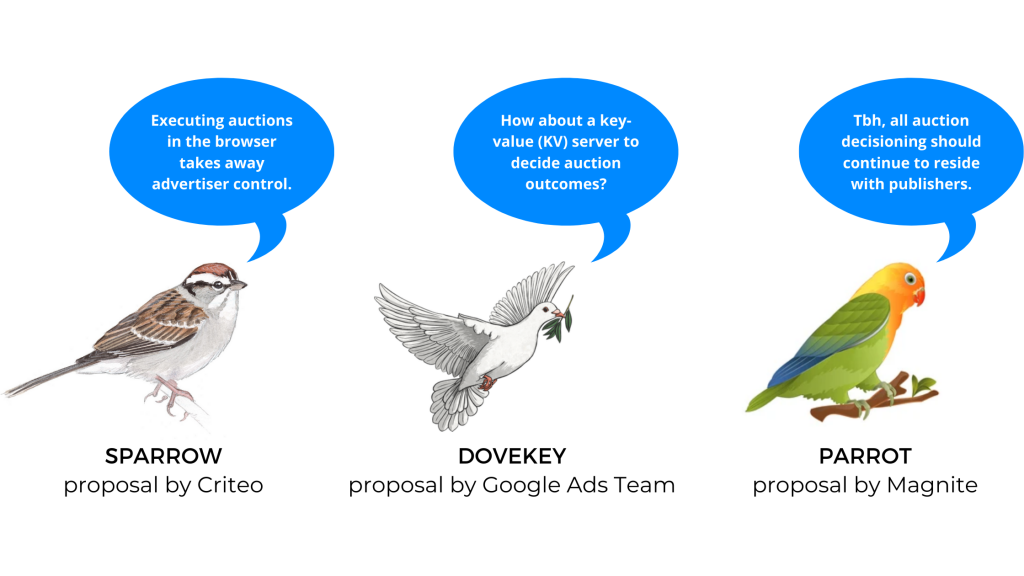

Specific feedback has also been received from various independent AdTech companies and will be incorporated into these ‘origin’ trials, including AdRoll’s TERN (TURTLEDOVE Enhancements with Reduced Networking), Criteo’s SPARROW (Secure Private Advertising Remotely Run On Webserver), Magnite’s PARRROT (The Publisher Auction Responsibility Retention Revision of TurtleDove) and Neustar’s PeLICAn (Private Learning and Inference for Causal Attribution).

DOVEKEY – is a modification of TURTLEDOVE built upon Criteo’s SPARROW proposal and tries to reduce the complexity of previous proposals by suggesting the use of key-value pairs to return bids instead of undertaking more complex computations within the browser.
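
To make the key-value idea concrete, here is a toy sketch; it is not the proposal’s actual API. It assumes bids are precomputed and stored under a key that combines an interest group with a contextual signal, so that choosing an ad needs only a lookup and a comparison rather than bidding logic run in the browser. The key layout, names and bid values are all invented for illustration.

```python
from typing import Optional

# Precomputed bids keyed by (interest group, contextual signal).
# The key layout and the values are hypothetical.
BID_TABLE = {
    ("running-shoes", "sports-news"): 2.40,
    ("running-shoes", "finance-news"): 0.90,
    ("city-breaks", "sports-news"): 1.10,
}


def lookup_bid(interest_group: str, context: str) -> Optional[float]:
    """Return the stored bid for this key, or None when no bid exists."""
    return BID_TABLE.get((interest_group, context))


def pick_winner(interest_groups: list, context: str) -> Optional[tuple]:
    """Pick the highest stored bid among the user's interest groups."""
    best = None
    for group in interest_groups:
        bid = lookup_bid(group, context)
        if bid is not None and (best is None or bid > best[1]):
            best = (group, bid)
    return best


winner = pick_winner(["running-shoes", "city-breaks"], "sports-news")
print(winner)
```

The point of the toy is only that the decision reduces to reading stored values; how keys are formed and where the table is hosted are exactly the questions the real proposals debate.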

For more information on RTB bidders participating in these types of trials simply click here

Updated webmaster guidelines for News and Discover

Websites can now potentially receive a manual penalty for violating Google News and Google Discover policies. The updated guidelines can also affect how Google finds, indexes, and ranks sites.

More information is available here and below is a summary.

Basic principles:

- Make pages primarily for users, not for search engines.

- Don’t deceive your users.

- Avoid tricks intended to improve search engine rankings.

Google Discover Penalties:

There are two manual penalties specific to Google Discover:

- Adult-themed content: Google has detected content that contains nudity, sexual acts, sexual activity, or sexually explicit content.

- Misleading content: Google has detected content that appears to be misleading users by promising a topic or story that is not reflected in the content.

Google News and Google Discover Penalties:

There are nine manual penalties for violation of the rules shared between Google News and Google Discover.

- Dangerous content: Google has detected content that could cause serious and immediate harm to people or animals.

- Harassing content: Google has detected content that contains harassment, bullying or threatening content.

- Hateful content: Google has detected content that incites hatred.

- Manipulated media: Google has detected audio, video or image content that has been manipulated to deceive, defraud or mislead.

- Medical content: Google has detected content intended to provide medical advice, diagnosis or treatment for commercial purposes.

- Sexually explicit content: Google has detected content that contains images or videos of an explicit sexual nature primarily intended to induce sexual arousal.

- Terrorist content: Google has detected content that promotes terrorist or extremist acts, including recruiting, inciting violence or celebrating terrorist attacks.

- Violence and gory content: Google has detected content that incites or glorifies violence. Google also does not allow extremely graphic or violent content whose purpose is to disgust others.

- Vulgar language and profanity: Google has detected content containing gratuitous obscenities or profanity.

Google warns that if your website violates one or more of these guidelines, it may take manual action. However, once you have remedied the problem, you can submit your site for reconsideration.

For more information on this process please click here.